RAG Chatbot

RAG chatbot agent using Pinecone, openai/ollama for document Q&A.

Overview

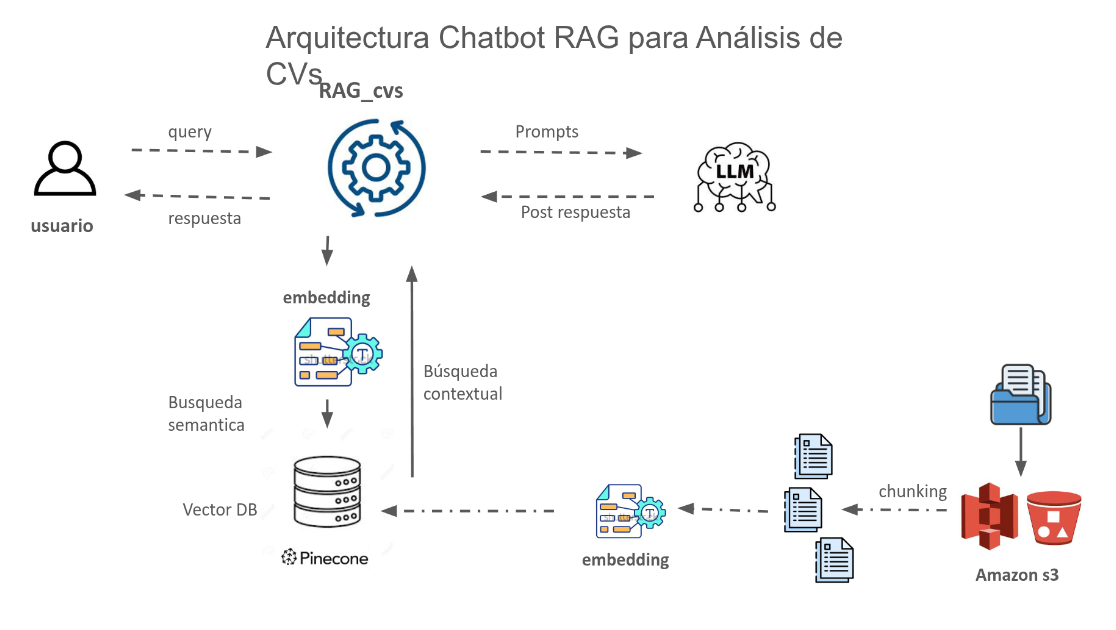

This project implements an enterprise-grade RAG (Retrieval-Augmented Generation) chatbot that combines large language models with external knowledge bases. The system processes PDF documents, creates semantic embeddings, and provides accurate, context-aware responses by retrieving relevant information before generating answers.

System Architecture

Core Components:

- Cloud Storage: PDF documents storaage in S3 bucket of AWS(cloud storage)

- Document Processing: PDF parsing and intelligent text chunking

- Embedding Generation: Semantic vector representations using OpenAI/Ollama

- Vector Storage: Pinecone cloud database for scalable similarity search

- Retrieval System: Top-K similarity search with metadata filtering

- LLM Integration: LLaMA 3 or GPT-4 for response generation

- Prompt Engineering: Custom prompts for context-aware responses

- Interactive UI: easy to use interface for users to interact with Gradio

RAG Pipeline:

Technical Implementation

Technology Stack:

- Vector Database: Pinecone (1536-dim vectors, cosine similarity)

- LLM Options: LLaMA 3 (via Ollama) or GPT-4.1-mini

- Framework: LangChain for RAG orchestration

- Document Processing: PyMuPDF for PDF text extraction

Key Features:

- Multi-document support with cross-document retrieval

- Conversation memory for context-aware interactions

- Source citations linking to specific pages/sections

- Hybrid search with metadata filtering

Deployment

Infrastructure:

- FastAPI for REST API

- Docker containerization

- Kubernetes deployment on AWS EKS

- Redis caching for performance optimization

Performance Metrics:

- <3 seconds end-to-end response time

- 100+ queries per second throughput

- <2% hallucination rate (thanks to RAG grounding)

Technology Stack

you can checkout ![]() my github repository for further details, and sorry againt this may be a bit mess and in spanish but it is a demo project used as MVP(minimal viable product).

my github repository for further details, and sorry againt this may be a bit mess and in spanish but it is a demo project used as MVP(minimal viable product).

This project demonstrates expertise in RAG systems, scalable vector databasesPinecone, and production AI deployment(using docker + kubernetes), and monitoring of KPIs(key Performance Indicators). For collaboration opportunities or more clarifications, please contact me.